Our collaborative club projects with UK water companies have provided valuable insight into how analytics is successfully developed and adopted in operational environments. These projects are built on a simple principle: the most effective analytics is created in partnership with the teams who rely on it.

Working across multiple organisations allows challenges to be examined from different regulatory, operational, and cultural perspectives. It reinforces our belief that analytics rarely succeeds in isolation. Instead, it evolves through collaboration, shared learning, and open challenge.

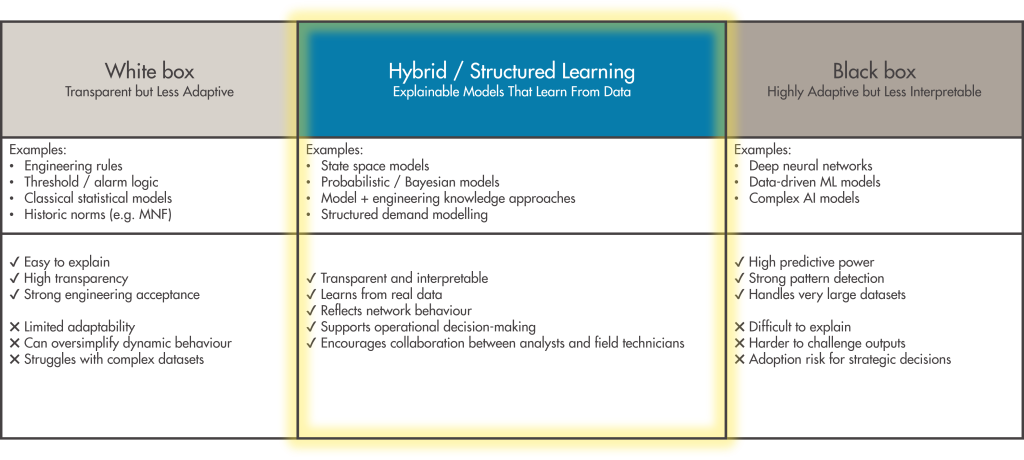

One consistent lesson has been that technical performance alone does not guarantee adoption. Models must behave in ways that practitioners recognise as realistic. When outputs align with engineering intuition and respond logically to real-world events, confidence grows rapidly. When they do not, even technically impressive models can struggle to gain traction.

The Paradigm club project demonstrated this clearly. By modelling expected customer demand behaviour within District Metered Areas (DMAs), Paradigm helped shift leakage analysis away from reliance on historic norms and minimum night flow indicators. Instead, it introduced a structured understanding of how each DMA should perform over a full 24-hour cycle and throughout the year.

One of the most interesting observations during Paradigm’s adoption has been how analysts with different levels of experience interact with analytical models.

Among experienced analysts, Paradigm is often used as a tool to challenge their own understanding of network behaviour. These analysts bring deep operational knowledge and use the model as a second perspective. In many cases, this leads to valuable discussions between analysts and field teams. Sometimes these discussions validate traditional understanding; occasionally they highlight where long-held assumptions about network behaviour may not fully reflect reality. In these situations, the model strengthens engineering judgement rather than replacing it.

At the other end of the spectrum, we have seen less experienced analysts become highly reliant on model outputs. While this demonstrates strong confidence in the analytics, it can sometimes reduce critical challenge. When models are followed without question, opportunities to identify data issues or model limitations can be missed. Ironically, this can slow the improvement process that makes these tools more effective.

This contrast has reinforced an important principle for us: the best analytical tools are not those that replace human expertise, but those that encourage constructive challenge. Models improve when they are questioned. Organisations gain the greatest value when analytics and operational experience strengthen each other.

Building on these lessons, the Dynamo club project is applying the same collaborative philosophy to pressure management. As pressure control schemes and monitoring infrastructure increase in scale and complexity, distinguishing genuine network opportunities from data or asset issues becomes both more important and more difficult. Dynamo will focus on providing structured insight that supports both area-level optimisation and strategic pressure management decisions.

Across all of our collaborative work, two enabling themes consistently emerge: trusted data foundations and investment in skills development. Reliable analytics depends on validated, connected datasets that provide a consistent view of network performance. Equally, models deliver the greatest value when organisations invest in shared understanding and training that supports confident and consistent use.